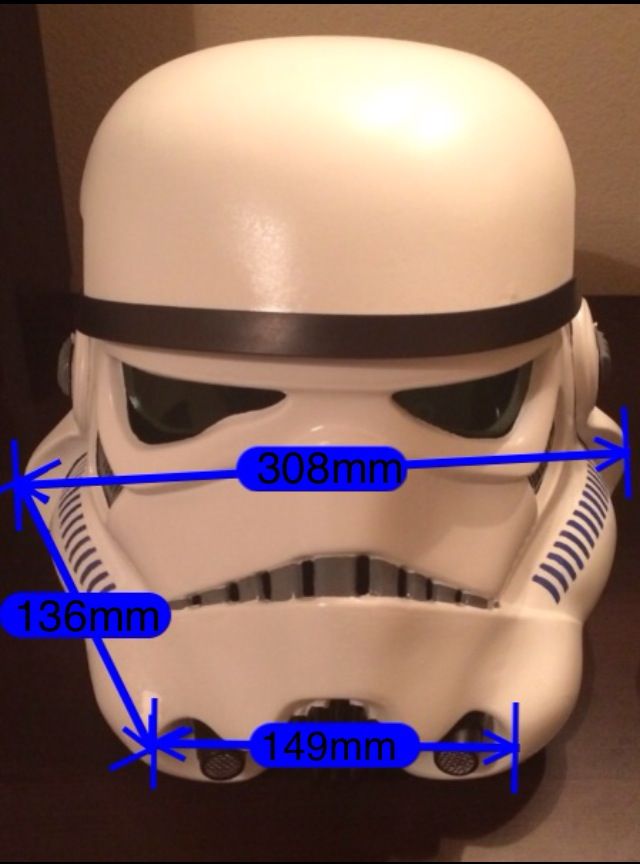

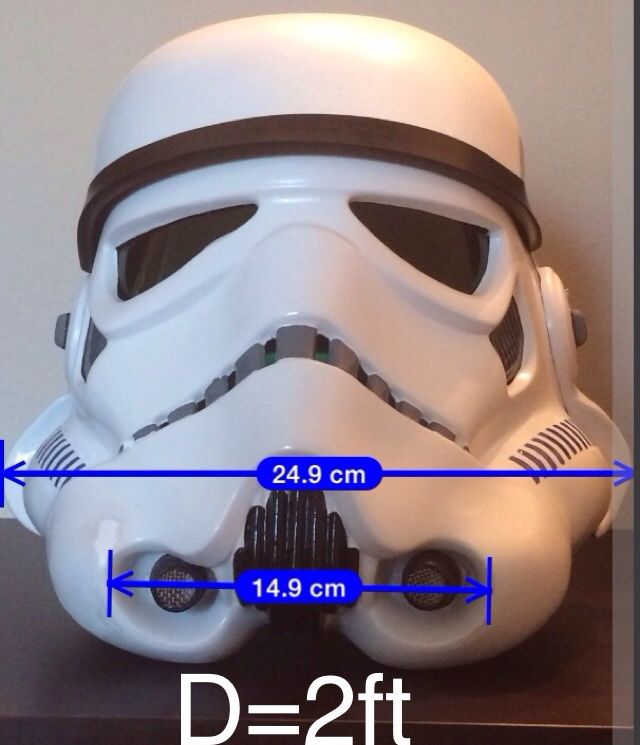

Start by making accurate linear measurements of the helmet features to be compared. I've chosen features that are separated in depth, parallel to each other, and perpendicular to our lines of sight. Feature 1 is the distance between the outside edges of the ears. Feature 2 is the distance between the outside edges of the tube openings in front:

- Length between tube openings (Feature 2): 149 mm
- Length between ears (Feature 1): 308 mm
- Depth from ears to tubes: 136 mm

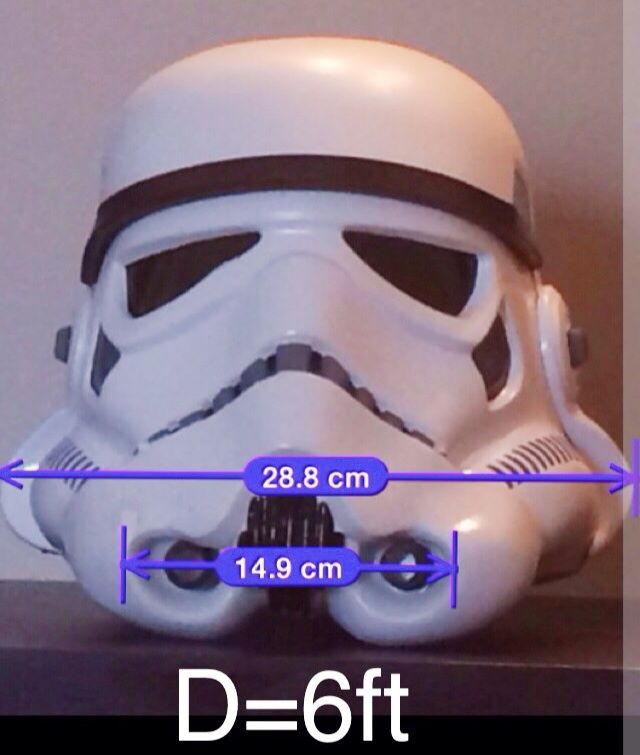

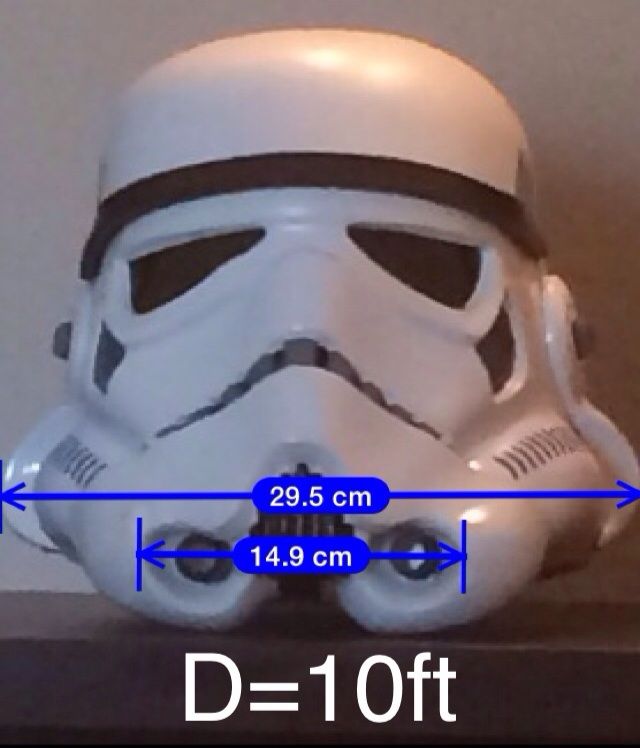

Photos were taken while pulling back from the front of the helmet in 1 ft increments at a constant camera height. Each photo was cropped to contain only the helmet.

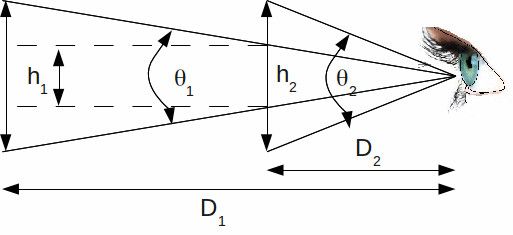

From each viewpoint we can calculate the angle subtended by each feature. Note that each viewpoint has two known distances: from the viewpoint to the tubes and from the viewpoint to the ears. The second is simply the first plus the 136 mm ear-to-tube depth.

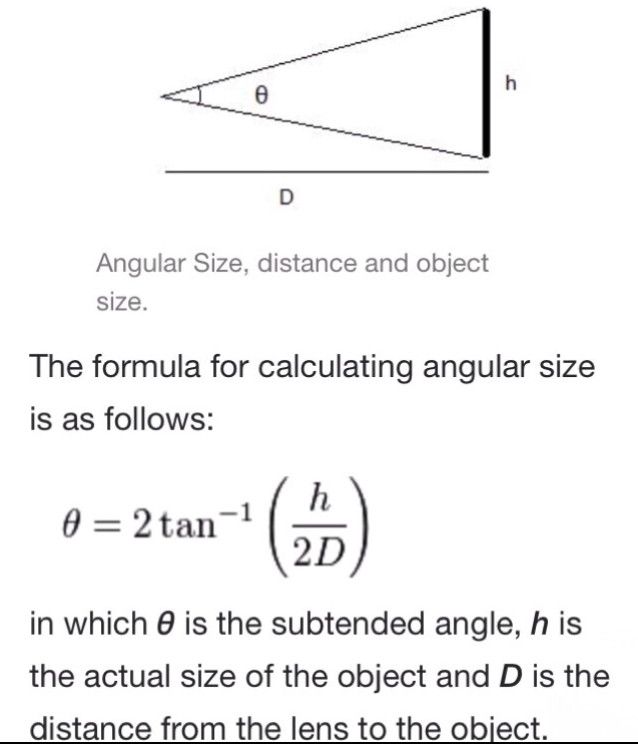

The following equation relates subtended angle, distance, and feature length (h=feature width in our case):

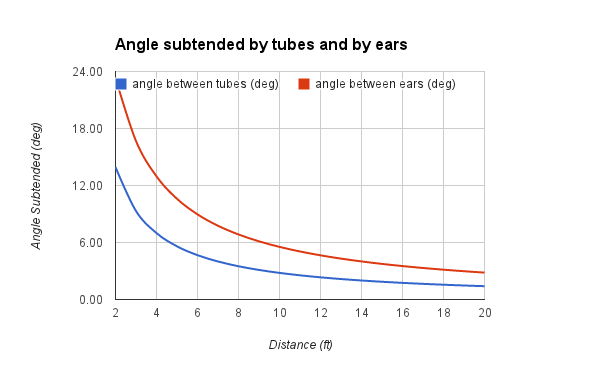
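Written out, the standard form of this relation for a feature of length $h$ centered on and perpendicular to the line of sight at distance $d$ is shown below, followed by its application to the two features. The symbols $d$ (distance to the tube plane), $D$ (ear-to-tube depth, 136 mm), $h_1$ (ear-to-ear, 308 mm) and $h_2$ (tube-to-tube, 149 mm) are labels chosen here and may differ from the notation in the equation image. Because $\arctan x \approx x$ for small $x$, the last line sketches why the ratio of the angles tends toward the ratio of the lengths as the camera moves away.

$$
\theta = 2\arctan\!\left(\frac{h}{2d}\right)
$$

$$
\theta_1 = 2\arctan\!\left(\frac{h_1}{2(d + D)}\right), \qquad
\theta_2 = 2\arctan\!\left(\frac{h_2}{2d}\right)
$$

$$
\lim_{d\to\infty}\frac{\theta_1}{\theta_2}
= \lim_{d\to\infty}\frac{h_1/(d + D)}{h_2/d}
= \frac{h_1}{h_2}
= \frac{308}{149} \approx 2.07
$$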

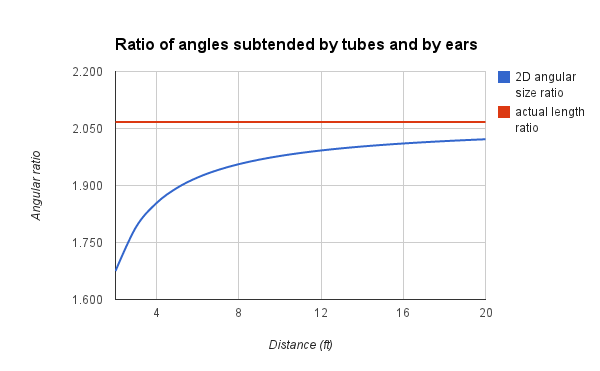

We calculate the angle subtended by each feature and plot them as a function of distance to the front of the helmet:

The angles become smaller as we pull back. What may be less apparent is that the ratio of the two angles also changes with distance: as the distance increases, the ratio of the subtended angles converges to the ratio of the features' actual lengths (308/149 ≈ 2.07). This changing ratio is what produces perspective distortion along the depth of the object at different distances.
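The convergence can be checked numerically. The sketch below assumes that the camera distance is measured to the plane of the tube openings and that the ears lie 136 mm farther away, with both features centered on the line of sight. The constant and function names are illustrative, not taken from the original spreadsheet.

```python
import math

# Helmet measurements in millimetres.
TUBE_WIDTH_MM = 149.0  # Feature 2: outside edges of the tube openings
EAR_WIDTH_MM = 308.0   # Feature 1: outsides of the ears
DEPTH_MM = 136.0       # assumed: ear plane sits this far behind the tube plane
MM_PER_FT = 304.8


def subtended_angle(length_mm: float, distance_mm: float) -> float:
    """Angle (radians) subtended by a feature centred on the line of sight."""
    return 2.0 * math.atan(length_mm / (2.0 * distance_mm))


def angle_ratio(distance_ft: float) -> float:
    """Ear-to-ear angle divided by tube-to-tube angle.

    Assumes distance_ft is measured from the camera to the tube plane,
    so the ears are DEPTH_MM farther away.
    """
    d_tubes = distance_ft * MM_PER_FT
    d_ears = d_tubes + DEPTH_MM
    return subtended_angle(EAR_WIDTH_MM, d_ears) / subtended_angle(TUBE_WIDTH_MM, d_tubes)


if __name__ == "__main__":
    print(f"actual length ratio: {EAR_WIDTH_MM / TUBE_WIDTH_MM:.3f}")
    # Same 1 ft increments used for the photos.
    for ft in range(1, 11):
        print(f"{ft:2d} ft  angle ratio = {angle_ratio(ft):.3f}")
```

Under these assumptions the angle ratio rises from roughly 1.4 at 1 ft toward, but stays below, the actual length ratio of about 2.07.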

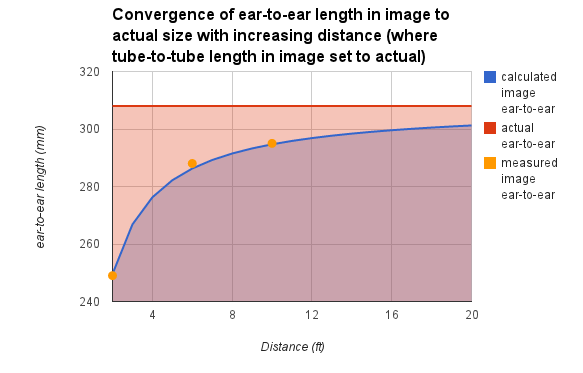

In the captured images we can scale the tube-to-tube feature to its known length (14.9 cm) and then measure the ear-to-ear feature in proportion. These image measurements were taken at distances of 2 ft, 6 ft, and 10 ft:

Counterintuitively, the more distant image gives a truer ratio between the two feature lengths. In effect, depth is compressed with increasing distance, so that the front and back depth planes are virtually "squashed together".

Allowing for measurement error, these image measurements (the points on the plot above) are very close to the calculated predicted values.
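A prediction of the kind these measurements are compared against can be sketched as follows: scale the tube-to-tube feature to 14.9 cm, then the ear-to-ear feature should appear as 14.9 cm times the ratio of the subtended angles. This assumes image size is proportional to subtended angle and that distance is measured to the tube plane. For comparison, the sketch also includes an ideal pinhole (rectilinear) model, where image size is proportional to length divided by distance; that second model is a swapped-in assumption, not something stated in the original. All names are hypothetical.

```python
import math

TUBE_WIDTH_CM = 14.9  # Feature 2, used as the image scale reference
EAR_WIDTH_CM = 30.8   # Feature 1, actual length
DEPTH_CM = 13.6       # assumed: ears lie this far behind the tube plane
CM_PER_FT = 30.48


def subtended_angle(length: float, distance: float) -> float:
    """Angle (radians) subtended by a centred feature; units must match."""
    return 2.0 * math.atan(length / (2.0 * distance))


def predicted_ear_length_cm(distance_ft: float) -> float:
    """Apparent ear-to-ear length with tube-to-tube scaled to 14.9 cm (angle model)."""
    d_tubes = distance_ft * CM_PER_FT
    d_ears = d_tubes + DEPTH_CM
    ratio = subtended_angle(EAR_WIDTH_CM, d_ears) / subtended_angle(TUBE_WIDTH_CM, d_tubes)
    return TUBE_WIDTH_CM * ratio


def pinhole_ear_length_cm(distance_ft: float) -> float:
    """Same prediction under an ideal pinhole model (image size ~ length / distance)."""
    d_tubes = distance_ft * CM_PER_FT
    d_ears = d_tubes + DEPTH_CM
    return TUBE_WIDTH_CM * (EAR_WIDTH_CM / d_ears) / (TUBE_WIDTH_CM / d_tubes)


if __name__ == "__main__":
    for ft in (2, 6, 10):
        print(
            f"{ft:2d} ft  angle model: {predicted_ear_length_cm(ft):.2f} cm  "
            f"pinhole model: {pinhole_ear_length_cm(ft):.2f} cm  "
            f"(actual {EAR_WIDTH_CM} cm)"
        )
```

At these distances the two models differ by about 1% or less, which is small next to typical hand measurement error on a photo.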

So this controlled experiment demonstrates that the effects of perspective distortion can be predicted and quantified, though the conditions were constrained to features lying entirely in separate depth planes. Given a similar scenario in which three of the four values (distance, depth between features, feature length 1, and feature length 2) are known, together with the ratio of the two features measured in the image, the equations provided could be used to predict the unknown value.
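As one illustration, the sketch below solves for the unknown camera distance when the depth, both feature lengths, and an image-measured angle ratio are known. It assumes the same subtended-angle relation, distances measured to the front feature's plane, and a ratio that crosses the observed value only once within the search interval; it uses plain bisection rather than any method from the original spreadsheet. Function names and the observed ratio of 1.92 are hypothetical.

```python
import math


def subtended_angle(length: float, distance: float) -> float:
    """Angle (radians) subtended by a feature centred on the line of sight."""
    return 2.0 * math.atan(length / (2.0 * distance))


def angle_ratio(d_front: float, depth: float, back_len: float, front_len: float) -> float:
    """Angle of the back feature divided by angle of the front feature."""
    return subtended_angle(back_len, d_front + depth) / subtended_angle(front_len, d_front)


def solve_distance(observed_ratio: float, depth: float, back_len: float,
                   front_len: float, lo: float = 1e-3, hi: float = 1e7,
                   iters: int = 200) -> float:
    """Bisection for the front-plane distance whose predicted ratio matches.

    Assumes exactly one sign change of (angle_ratio - observed_ratio) in [lo, hi].
    """
    f_lo = angle_ratio(lo, depth, back_len, front_len) - observed_ratio
    f_hi = angle_ratio(hi, depth, back_len, front_len) - observed_ratio
    if f_lo * f_hi > 0:
        raise ValueError("observed_ratio is not bracketed by [lo, hi]")
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        f_mid = angle_ratio(mid, depth, back_len, front_len) - observed_ratio
        # Keep the half-interval that still contains the sign change.
        if (f_mid < 0) == (f_lo < 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    # Hypothetical ratio measured from a photo taken at an unknown distance (mm inputs).
    d = solve_distance(1.92, depth=136.0, back_len=308.0, front_len=149.0)
    print(f"estimated distance to tube plane: {d:.0f} mm ({d / 304.8:.2f} ft)")
```

The same pattern would work for any of the other unknowns by bisecting over that variable instead, provided the ratio changes monotonically with it over the chosen interval.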

A Google Sheets spreadsheet containing the values and calculations presented here is available at:

https://docs.google.com/spreadsheets/d/16owHfgKkfjNcMJvXJdzJe1f3lSgiGfCDq4I1YdTON-w/edit?usp=sharing